Most A/B tests fail. Not because teams lack tools or ambition, but because they test the wrong things for the wrong reasons. When hypotheses are built on gut feeling rather than real user behavior, even a perfectly executed experiment can't save a flawed idea. AI is changing that equation. Not by replacing the judgment of experienced optimizers, but by giving every team the behavioral evidence they need to test smarter, prioritize faster, and learn more from every experiment they run.

This guide explores how AI is reshaping A/B testing from end to end, and how platforms like Contentsquare, with Journey Analysis, Zoning Analysis, Session Replay, and Sense (Contentsquare's AI copilot), help teams move from intuition-based guesses to evidence-backed experiments, faster than ever.

The evolution of A/B testing

You know the fundamental value of A/B testing. It is the bedrock of data-driven decision-making. It lets you compare 2 versions of a webpage, app feature, landing page, or marketing campaign to see which performs better.

Traditional A/B testing involves splitting your audience into 2 groups. One group sees the original version (the control), while the other sees the variation (the treatment). You then measure key metrics like conversion rates, engagement, or user experience outcomes to determine the superior version. This method helps you optimize everything from button colors to headline copy, product page layouts, and campaign messaging. It provides clear, actionable insights based on observed user behavior.
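To make "determine the superior version" concrete, here is a minimal sketch of one common way to compare two conversion rates: a two-proportion z-test. The function name and the visitor and conversion counts are illustrative assumptions, not figures from a real test, and your testing platform may well use a different statistical method.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (absolute lift, z statistic, two-sided p-value) for B vs. A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_b - rate_a, z, p_value

# Hypothetical counts: control (A) vs. treatment (B).
lift, z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
print(f"lift={lift:.2%}  z={z:.2f}  p={p:.4f}")
```

A small p-value (commonly below 0.05) suggests the difference is unlikely to be due to chance alone. It does not explain why users behaved differently, which is the gap behavioral analysis is meant to fill.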

However, manual A/B testing faces significant challenges in a fast-paced environment. Running tests sequentially means waiting for statistical significance, which takes days or weeks and slows down your optimization cycle. You typically test only a few variables at a time, making multivariate testing exponentially complex and data-intensive. Manual segmentation relies on broad categories, which misses nuanced user behaviors and evolving behavior patterns. This effectively treats diverse users as one monolithic group. Hypotheses are often based on intuition or past successes, which might not align with current user needs or market trends.

This is where Contentsquare's Experience Analytics suite adds critical context. Journey Analysis reveals exactly how users navigate your site page by page, from entry to exit, surfacing where they drop off, loop back, or abandon before converting.

Zoning Analysis shows how users interact with individual page elements, such as buttons, banners, and CTAs, so teams can build experiments based on what users actually notice and engage with, rather than assumptions alone.

Interpreting complex results from multivariate tests requires significant human effort and statistical expertise. Traditional testing also struggles to deliver highly personalized experiences at scale because it is not designed to adapt to individual user journeys in real time.

What is AI-powered A/B testing

AI-powered A/B testing integrates machine learning algorithms and predictive analytics into the entire testing process. It intelligently learns from user interactions, predicts optimal outcomes, and automates manual tasks associated with traditional testing. Instead of relying only on static rules, modern A/B testing tools use AI to detect patterns, prioritize opportunities, and optimize experiments continuously. In some cases, an embedded AI assistant helps teams interpret results, generate recommendations, and streamline decision-making. Contentsquare's Sense does exactly this: it acts as an on-demand analyst, answering natural-language questions like "Where are high-intent users dropping off?" and instantly surfacing funnels, segments, and journey data to guide your next experiment.

AI fundamentally transforms A/B testing by introducing 3 core principles:

Automation handles repetitive tasks from data collection to statistical analysis, freeing your team for strategic work

Personalization identifies subtle patterns to deliver the most effective content to each individual user

Dynamic adaptation adjusts tests based on real-time performance without manual intervention

These capabilities make experimentation more responsive, more scalable, and more closely aligned with real customer intent.

Optimized resource allocation

Your time, budget, and expertise are valuable. AI helps ensure they are used where they matter most. AI predicts potential outcomes to help you prioritize the hypotheses and experiments most likely to yield significant results. It automatically reallocates traffic away from poorly performing variants early in a test to minimize lost conversions and wasted resources.

Impact Quantification takes this a step further by translating UX blockers directly into revenue terms. Instead of debating which friction point to fix first, teams can see exactly how much each issue costs in missed conversions, and forecast the potential gain if it is resolved. This turns resource allocation from a debate into a data-driven decision.

AI also scans your existing data to identify unusual drops in conversions, unexpected user paths, or areas of high friction. Contentsquare's AI-Powered Alerts surface unexpected behavioral changes automatically, such as a sudden spike in exits or a drop in engagement on a key page, enabling fast response before test results are distorted.

Sense Chat can then be prompted to pinpoint exactly where users are abandoning, segment results by audience, and calculate the potential revenue impact. Combined with Voice of Customer (VoC) capabilities, such as AI-powered surveys that collect and analyze customer feedback with sentiment analysis, teams can validate where friction appears in the customer journey and prioritize the experiments most likely to improve conversion and user experience. Natural language analysis of survey responses uncovers pain points that quantitative data alone cannot reveal. In mature experimentation programs, this can also improve internal workflows, since teams spend less time manually sorting opportunities and more time acting on high-impact insights.
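The idea of putting a revenue figure on each friction point can be illustrated with simple arithmetic. The sketch below is a back-of-the-envelope estimate under stated assumptions, not Contentsquare's Impact Quantification method: the function name, the issues, and every number are hypothetical.

```python
def estimated_missed_revenue(sessions_affected, baseline_cr, affected_cr, avg_order_value):
    """Revenue lost because affected sessions convert below the baseline rate."""
    missed_conversions = sessions_affected * max(baseline_cr - affected_cr, 0.0)
    return missed_conversions * avg_order_value

BASELINE_CR = 0.03   # hypothetical conversion rate of unaffected sessions
AOV = 80.0           # hypothetical average order value

# Hypothetical friction points with the conversion rate of sessions that hit them.
issues = [
    {"name": "slow product gallery", "sessions": 40_000, "affected_cr": 0.026},
    {"name": "promo code error", "sessions": 12_000, "affected_cr": 0.012},
]

for issue in issues:
    issue["missed_revenue"] = estimated_missed_revenue(
        issue["sessions"], BASELINE_CR, issue["affected_cr"], AOV
    )

# Fix the most expensive issue first.
ranked = sorted(issues, key=lambda i: i["missed_revenue"], reverse=True)
for issue in ranked:
    print(f'{issue["name"]}: {issue["missed_revenue"]:,.0f}')
```

Note that the issue touching fewer sessions ranks first here because its conversion gap is much larger, which is exactly the kind of trade-off that is hard to settle by intuition.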

Test design and variant creation

AI actively assists in the creative process. Generative AI tools rapidly produce multiple headline variations, call-to-action button options, or basic layout designs based on your specifications and brand guidelines. Contentsquare's Zoning Analysis and Sense Analyst are particularly powerful here. Sense Analyst automatically scans a page, creates multiple zoning analyses, takes screenshots, and identifies each zone. It then delivers specific UX recommendations to improve page performance, telling you which zones are underperforming, which CTAs are getting ignored, and which layout changes are most likely to lift conversions.

For example, Heatmaps can show whether users are engaging with a pricing section, missing a key call to action, or focusing on elements that are lower value for conversion. Teams can use those insights to create stronger A/B test variants, such as changing layout hierarchy, repositioning buttons, or refining on-page messaging.

![[Visual] Heatmaps-context](http://images.ctfassets.net/gwbpo1m641r7/2fsiHvsqUU7VAYC7JV8f35/8e6f253ada08724971cf00862bd22fde/Heatmaps-context.png?w=3840&q=100&fit=fill&fm=avif)

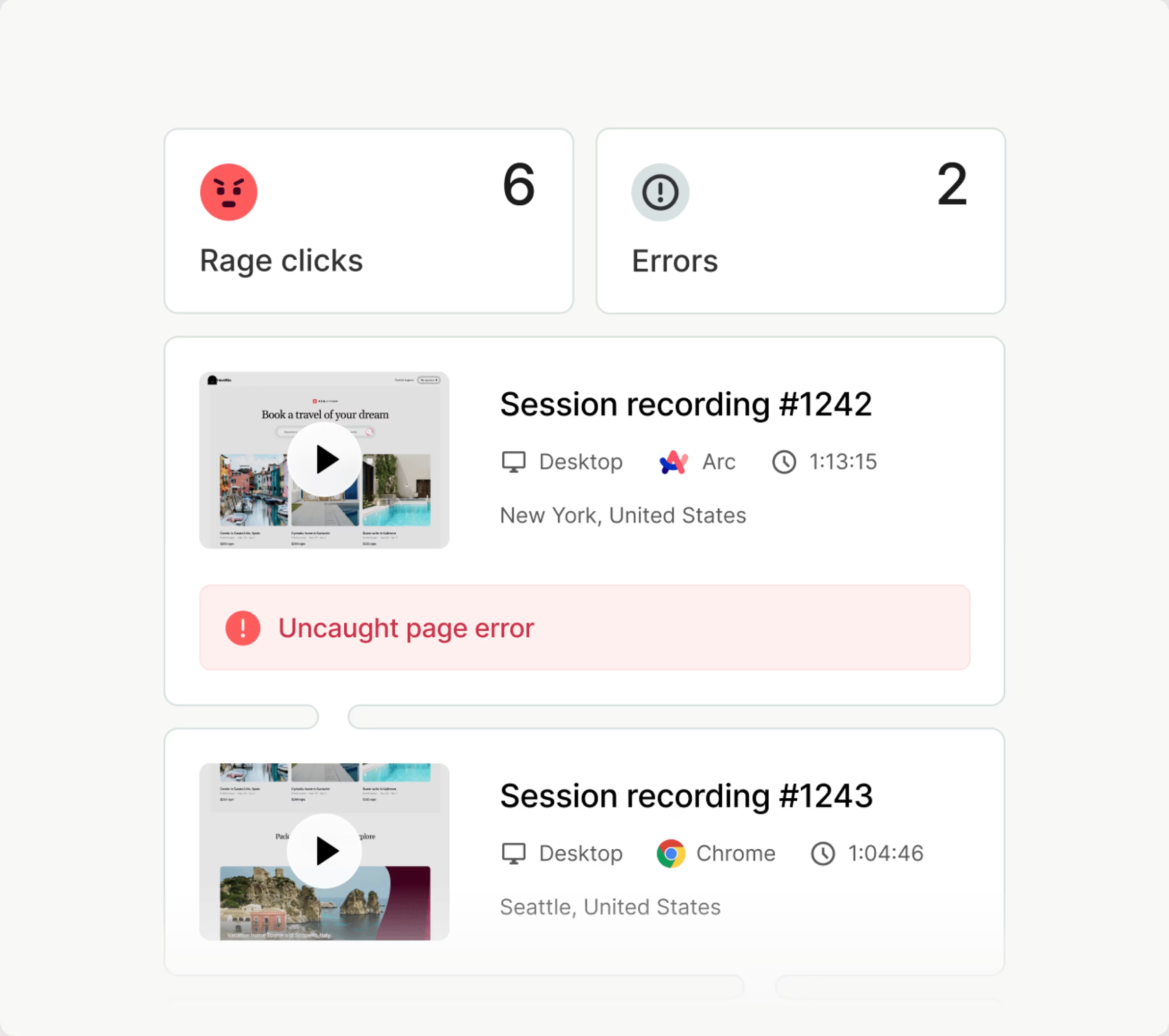

By layering in Session Replay with AI-assisted summaries, teams can also watch how users actually experience a page, catching the individual hesitation moments or rage clicks that heatmaps surface only at the aggregate level, and using those behavioral signals to inform more precise variant design.

![[Asset] Session replay summaries](http://images.ctfassets.net/gwbpo1m641r7/37Slb23dAdFsAgNItuUNPc/5ad533ecdc801e082aeef8bfaca324ce/sessionreplaysummary.webp?w=3840&q=100&fit=fill&fm=avif)

The AI Mapping Assistant removes another common bottleneck: manual tagging and configuration. Teams can simply describe what they want to group or track, and the assistant automatically generates the rules and conditions, dramatically reducing setup time so experiments can launch faster.

Audience segmentation and targeting

AI creates and adapts segments in real time based on current behavior, purchase history, device, referral source, and context. Propensity modeling predicts a user's likelihood to convert or churn, allowing you to target different test variants to users with varying propensities.
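As a rough illustration of propensity modeling, the sketch below fits a tiny logistic regression with plain gradient descent and uses the predicted conversion probability to decide which variant a visitor sees. Everything here is an assumption made for the example: the two features, the training rows, the variant names, and the 0.5 threshold. Production systems typically use far richer features and dedicated machine learning libraries.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def score(weights, features):
    """Predicted conversion probability; weights[0] is the bias term."""
    z = weights[0] + sum(w * v for w, v in zip(weights[1:], features))
    return sigmoid(z)

def fit_logistic(rows, labels, lr=0.5, epochs=2000):
    """Fit logistic regression weights by batch gradient descent on log loss."""
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for features, label in zip(rows, labels):
            error = score(weights, features) - label
            grad[0] += error
            for j, value in enumerate(features, start=1):
                grad[j] += error * value
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad)]
    return weights

# Hypothetical training data: (pages viewed / 10, added to cart) -> converted?
rows = [(0.1, 0), (0.2, 0), (0.3, 1), (0.4, 0), (0.5, 1),
        (0.6, 0), (0.7, 1), (0.8, 0), (0.9, 1), (1.0, 1)]
labels = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]
weights = fit_logistic(rows, labels)

def pick_variant(features, threshold=0.5):
    # Illustrative rule: keep things simple for likely buyers, nudge hesitant visitors.
    if score(weights, features) >= threshold:
        return "streamlined_checkout"
    return "incentive_banner"

high = score(weights, (0.9, 1))  # engaged visitor with an item in the cart
low = score(weights, (0.1, 0))   # visitor who has barely browsed
print(pick_variant((0.9, 1)), pick_variant((0.1, 0)))
```

The useful output is the score itself: once each visitor has a propensity, you can test different treatments for high- and low-propensity groups instead of showing everyone the same variant.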

User Segmentation enables teams to create segments based on user actions or third-party data brought into the platform. You can compare how new signups, loyal customers, and casual users navigate your site or app, identify what each segment needs, and design test variants tailored to each group's actual journey. This works across devices and sessions, so teams are not limited to single-session snapshots.

![[Visual] Contentsquare user segmentation](http://images.ctfassets.net/gwbpo1m641r7/7dMLsLtzhcM3uFGYWG9yrj/44975fedf7d176b9561cc587abf26fd3/unnamed__54___1_.png?w=1080&q=100&fit=fill&fm=avif)

Segmentation and Behavior Analytics also lets teams filter by traffic source, device, campaign, and more to understand how different audiences interact and convert. It can detect subtle behavior patterns, such as users who compare pricing repeatedly, hesitate during checkout, or engage differently across devices. These patterns become the raw material for sharper, more meaningful test hypotheses rather than broad audience assumptions.

Smarter analysis and interpretation

Some advanced tools generate human-readable summaries of test results, explaining why a variant won or lost in plain language. AI analyzes the results of multiple past tests to identify overarching patterns that consistently drive positive outcomes across your platform. This is where the A/B Testing Workflow and Sense become especially valuable together. The A/B Testing Workflow creates a seamless, circular process: move directly from an insight to creating a corresponding experiment in your A/B testing tool, then instantly see results visualized back. Teams go from insight to experiment creation in minutes, not hours.

Sense Analyst, Contentsquare's autonomous AI agent, handles the heavy lifting of test analysis automatically. It summarizes test results, surfaces behavioral differences between variants, and recommends next steps, all without manual dashboard configuration. It can also compare user segments, journeys, or pages at scale, applying expert best practices to identify what drove the outcome and what the team should do next.

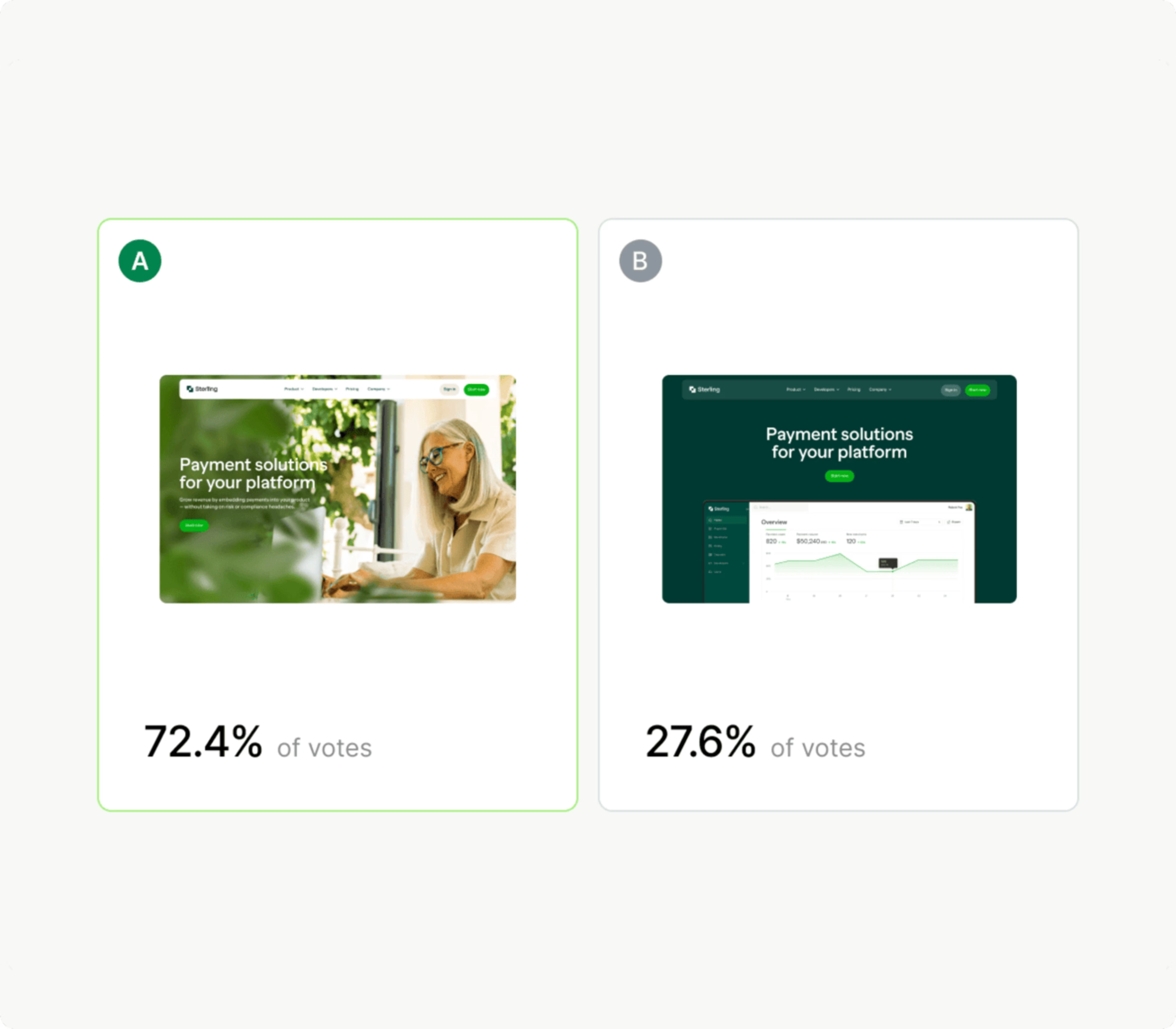

Page Comparator lets teams view control and variant snapshots side by side within the platform, seeing not just which variant won but exactly which zones, elements, and user interactions drove the difference. This makes it possible for marketers, product managers, and analysts to understand results without requiring deep statistical expertise.

![[Visual] Page Comparator](http://images.ctfassets.net/gwbpo1m641r7/v3yCoqgDH3UBjLUozkKyk/cd5652b033f8f51a1f2c2f0cfe1b0be5/image__71_.png?w=3840&q=100&fit=fill&fm=avif)

Predictive modeling for future optimization

Look beyond current tests to future opportunities. AI predicts the long-term revenue or engagement impact of implementing a winning variant to help you build strong business cases.

Impact Quantification supports this by tying behavioral and UX data directly to business KPIs like revenue, conversion, and bounce rate. Rather than defending optimization decisions based on lift percentages alone, teams can show stakeholders the concrete value of shipping a winning variant at scale.

As Contentsquare's platform evolves, Sense increasingly enables predictive querying: teams can ask questions about their data to anticipate where high-intent users are likely to drop off next, rather than waiting for conversion rates to decline. This moves experimentation from reactive to proactive, allowing teams to design tests that address emerging friction before it compounds.

The MCP Server extends this intelligence by enabling the same core analytical capabilities to communicate with other AI agents and large language models. Teams can pull insights directly into tools like Claude, Slack, or Jira, combining behavioral data with broader workflow context to make faster, better-informed decisions about which experiments to run next.

![[Visual] Contentsquare's MCP: Bridging Agents and Experience Data](http://images.ctfassets.net/gwbpo1m641r7/DlgcvFon5os83CdNaML2E/0733ad4790b57851fc3921421b1197ba/unnamed.png?w=1920&q=100&fit=fill&fm=avif)

6 steps to implement AI for your A/B testing

Follow this structured approach to ensure an effective transition to AI-powered testing.

1. Define your objectives clearly

Identify your primary conversion metrics, such as purchase rate, sign-up rate, engagement time, or click-through rate. Pinpoint your current bottlenecks or areas of underperformance. Formulate well-defined hypotheses based on observed behavior, not just intuition.

Journey Analysis and Funnel Analysis are valuable starting points: they reveal where users are dropping off across key conversion paths, giving your hypotheses a behavioral foundation from the outset. You should also define how success connects to broader user experience goals, not just raw conversion metrics, to ensure your optimization efforts support long-term growth.

![[Visual] Journey-analysis](http://images.ctfassets.net/gwbpo1m641r7/6tPAZ9qTMoZxRFAefYrFOG/1d647b24e5c93831f0fb25cfd4bca9d7/Journey-analysis.png?w=3840&q=100&fit=fill&fm=avif)

2. Choose the right AI-powered A/B testing platform

Assess whether you need basic automated analysis or advanced features like generative AI for variants and predictive insights. Evaluate how the platform integrates with your existing analytics, CRM, and marketing automation tools.

Contentsquare integrates with 100+ certified, pre-built partner tools through its open API, and its A/B Testing Workflow connects directly to key experimentation platforms so insights flow seamlessly into test creation. When comparing A/B testing tools, look closely at usability, reporting depth, automation capabilities, and whether behavioral analytics can enrich your experimentation workflow end to end.

3. Ensure data quality

Clean, accurate, and consistent data is vital for AI. Address issues like missing values, duplicates, and incorrect formatting. Consolidate data sources from your website analytics, CRM, and marketing campaigns.

Contentsquare's autocapture functionality removes a major source of data gaps by automatically collecting behavioral data without requiring manual tagging. The AI Mapping Assistant further reduces configuration errors by automating page and element grouping. Ensure that all your conversion events and user interactions are correctly tracked and tagged. Your AI models are only as effective as the datasets behind them.
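For data you consolidate yourself, the three issues named in this step (missing values, duplicates, and incorrect formatting) can be handled with a small cleaning pass. This is a minimal sketch assuming events arrive as dictionaries with hypothetical `user_id`, `event`, and `timestamp` fields, with ISO 8601 timestamps; it is not a description of how autocapture works.

```python
from datetime import datetime

def clean_events(raw_events):
    """Drop incomplete rows, normalize formats, and remove exact duplicates."""
    seen = set()
    cleaned = []
    for event in raw_events:
        user_id = (event.get("user_id") or "").strip()
        name = (event.get("event") or "").strip().lower()
        timestamp = event.get("timestamp")
        if not user_id or not name or not timestamp:
            continue  # missing value
        try:
            parsed = datetime.fromisoformat(timestamp)
        except ValueError:
            continue  # incorrectly formatted timestamp
        key = (user_id, name, parsed)
        if key in seen:
            continue  # duplicate of an event already kept
        seen.add(key)
        cleaned.append({"user_id": user_id, "event": name, "timestamp": parsed})
    return cleaned

# Hypothetical raw export with one duplicate, one bad timestamp, one missing user.
raw = [
    {"user_id": "u1", "event": "Purchase", "timestamp": "2024-05-01T10:00:00"},
    {"user_id": "u1", "event": "purchase ", "timestamp": "2024-05-01T10:00:00"},
    {"user_id": "u2", "event": "signup", "timestamp": "01/05/2024 10:00"},
    {"user_id": None, "event": "purchase", "timestamp": "2024-05-01T11:00:00"},
    {"user_id": "u3", "event": "signup", "timestamp": "2024-05-01T12:30:00"},
]
cleaned = clean_events(raw)
print(len(raw), "raw events ->", len(cleaned), "clean events")
```

Silently dropping rows is a simplification. In practice you would likely count and log what was rejected, since a sudden rise in rejected events is itself a tracking problem worth investigating.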

4. Design your AI-enhanced experiments

Use AI-generated insights, like anomaly detection and opportunity sizing, to refine your hypotheses. Use Zoning Analysis and Sense Analyst recommendations to create multiple layout, copy, or CTA variations grounded in real user behavior. Decide how AI will dynamically shift traffic to winning variants during the test.

Determine if AI will personalize variants based on real-time user behavior. Set clear primary and secondary metrics that the AI will track for success. This is also the right stage to define how Impact Quantification will evaluate variant performance in business terms, not just engagement lift.
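Dynamic traffic shifting of the kind described in this step is often implemented as a multi-armed bandit. The sketch below uses Thompson sampling, one well-known bandit technique, on simulated visitors. It is an assumption-laden illustration, not a description of how any particular platform allocates traffic: the class name, variant names, and "true" conversion rates are all made up for the simulation.

```python
import random

class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over named variants."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # [conversions, non-conversions] observed so far for each variant.
        self.stats = {name: [0, 0] for name in variants}

    def choose(self):
        # Draw a plausible conversion rate for each variant from its
        # Beta(1 + conversions, 1 + non-conversions) posterior, then
        # serve the variant with the highest draw.
        draws = {
            name: self.rng.betavariate(1 + wins, 1 + losses)
            for name, (wins, losses) in self.stats.items()
        }
        return max(draws, key=draws.get)

    def record(self, variant, converted):
        self.stats[variant][0 if converted else 1] += 1

# Hypothetical true conversion rates, unknown to the sampler.
TRUE_RATES = {"control": 0.04, "variant_b": 0.08}

sampler = ThompsonSampler(TRUE_RATES, seed=7)
visitors = random.Random(11)
for _ in range(20_000):
    variant = sampler.choose()
    sampler.record(variant, visitors.random() < TRUE_RATES[variant])

traffic = {name: wins + losses for name, (wins, losses) in sampler.stats.items()}
print(traffic)
```

Early on, both variants receive traffic because the sampler is uncertain; as evidence accumulates, most visitors are routed to the stronger variant. One trade-off to decide at this design stage: uneven, adaptive allocation makes a classical fixed-sample significance test harder to interpret, so choose up front whether the goal is a clean readout or fewer lost conversions during the test.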

5. Launch, monitor, and iterate

Deploy your experiment through your chosen platform. Allow the AI to continuously monitor the test, distribute traffic, and identify trends. You will still want human oversight early on. Act on early insights if Sense detects a clear winner or a poorly performing variant. AI-Powered Alerts will flag unexpected behavioral changes as they happen, such as a sudden spike in rage clicks or an anomalous drop in form completions, so your team can react before results are skewed. Use the learnings from each experiment to inform your next set of optimizations.

Sense Analyst can assist with monitoring by running automated periodic analyses across your site, synthesizing data across analytics and session replays on a scheduled basis, and surfacing what changed, what matters, and what you should do next, all without manual setup.
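To show what "flagging an anomalous drop" can mean in practice, here is a minimal sketch that compares each day's metric with the mean and standard deviation of the preceding week. It is a deliberately simple z-score rule, not the method behind AI-Powered Alerts; the function name, window size, threshold, and daily values are assumptions.

```python
from statistics import mean, stdev

def flag_anomalies(values, window=7, threshold=3.0):
    """Return indices whose value is more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

# Hypothetical daily form completion rates during a test.
daily_form_completion = [0.31, 0.30, 0.32, 0.29, 0.31, 0.30, 0.32, 0.31, 0.18, 0.30]
anomalies = flag_anomalies(daily_form_completion)
print(anomalies)
```

A rule this simple has a known weakness: once an outlier enters the trailing window, it inflates the standard deviation and can mask further anomalies for a few days. It also ignores weekly seasonality, which is one reason dedicated alerting tools use more robust models.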

6. Analyze results and implement learnings

Review AI-generated reports to understand the reasons behind the results. Sense Analyst delivers A/B test summaries that surface behavioral differences between variants, explain what drove them, and recommend next steps in plain language. Use the Page Comparator to visualize control and variant side by side, and Session Replay Summaries to understand the human experience behind the numbers. Contentsquare can summarize up to 100 sessions at a time, saving analysts countless hours of video watching. Document your test hypotheses, variants, and results to build a knowledge base for your organization. Share insights across relevant teams to foster a culture of data-driven optimization.

![[Asset] Session replay summaries](http://images.ctfassets.net/gwbpo1m641r7/37Slb23dAdFsAgNItuUNPc/5ad533ecdc801e082aeef8bfaca324ce/sessionreplaysummary.webp?w=3840&q=100&fit=fill&fm=avif)

Overcoming challenges in AI-powered A/B testing

Integrating AI into A/B testing comes with hurdles. Being aware of these challenges helps you proactively address them.

AI models are only as good as the data they are trained on. Poor quality, inconsistent, or insufficient data leads to flawed insights and inaccurate predictions. Autocapture and AI Mapping Assistant help teams build and maintain a clean, comprehensive behavioral data foundation without relying on fragile manual tagging.

There is a misconception that AI completely replaces human optimizers. AI automates many tasks, but it requires human intelligence for strategic direction, creative input, ethical oversight, and interpreting nuanced results. The most effective programs combine machine efficiency with human judgment. Contentsquare's Sense is designed with this balance in mind: it removes the noise and the manual effort, but surfaces findings as inputs to human decision-making, not replacements for it.

It is also important to avoid treating AI as a black box. Sense shows which events and queries it used to reach a conclusion, and Sense Analyst makes its reasoning traceable, so teams can see what behavioral data informed a recommendation and where blind spots may exist. The organizations that build trust in their AI tools by maintaining transparency and human review will get the most durable results.

Real-world success with AI in A/B testing

⚡️ DPG Media used Contentsquare to increase their A/B test win rate by 22%

DPG Media (one of Europe's largest media companies) struggled to understand why A/B tests failed before using Contentsquare. With AI-powered behavioral analysis:

+22% higher win rate on tests using prior analysis vs. without

+6.6% increase in newspaper subscriptions

+7% increase in revenue

"Thanks to Contentsquare, we were able to streamline our A/B testing processes, increasing efficiency and ensuring that our web optimization strategies are backed by data-driven decision-making."

The future of A/B testing

The journey of AI in A/B testing is just beginning. You can expect even more sophisticated applications in the coming years. Advanced machine learning algorithms will continue to evolve. Reinforcement learning will enable systems to continuously learn and adapt in complex environments. Deep neural networks will become even better at understanding subtle patterns in user behavior, sentiment, and visual preferences.

Hyper-personalization and dynamic content will become the standard. AI will dynamically generate entire sections of web pages or email campaigns in real time, tailored precisely to an individual user's current context. Contentsquare's continued investment in Sense, Sense Analyst, and conversational intelligence through its acquisition of Loris (which analyzes support interactions, chat, and voice data for sentiment and intent) signals a future where behavioral insights extend across every touchpoint in the customer journey, not just clicks and scrolls.

The MCP Server points to a broader integration vision: a world where Contentsquare's analytical capabilities are embedded across an organization's entire digital workflow, from Figma design environments to Jira backlogs to business intelligence dashboards, making behavioral insight available to every team at every decision point.

We will also see more collaboration between human teams, embedded AI assistants like Sense, and autonomous agents like Sense Analyst that support experimentation at scale. As platforms mature, tighter connectivity will make it easier to activate insights across the full digital ecosystem, from analytics and personalization to campaign orchestration.

The result is a future where A/B testing becomes faster, more adaptive, and more deeply integrated into every digital decision. The organizations that embrace this AI-driven shift will be best positioned to understand customers, respond to changing behavior patterns, and deliver exceptional experiences at scale.

Final thoughts

AI is not just improving A/B testing; it is redefining it. By combining automation, predictive intelligence, personalization, and smarter analysis, AI helps teams move beyond slow and limited experimentation models. Whether you are optimizing a landing page, refining campaign messaging, or building more connected experimentation workflows, Contentsquare's Experience Intelligence platform provides the behavioral foundation every great experiment needs. From uncovering friction with Journey Analysis and Zoning Analysis, to prioritizing opportunities with Impact Quantification, to accelerating analysis with Sense Analyst, Contentsquare puts the right insights in the hands of every team, not just analytics specialists.

With the right strategy, the right data, and the right human oversight, you can turn AI-powered experimentation into a durable competitive advantage.

Traditional A/B testing relies on manual hypothesis creation, static audience segmentation, and sequential testing that can take days or weeks to reach statistical significance. AI-powered A/B testing automates much of this process: it analyzes real user behavior to surface stronger hypotheses, dynamically reallocates traffic to winning variants in real time, and generates plain-language summaries of results without requiring deep statistical expertise. The result is a faster, more accurate, and more scalable experimentation program.


![[Visual] Contentsquare's Content Team](http://images.ctfassets.net/gwbpo1m641r7/3IVEUbRzFIoC9mf5EJ2qHY/f25ccd2131dfd63f5c63b5b92cc4ba20/Copy_of_Copy_of_BLOG-icp-8117438.jpeg?w=1920&q=100&fit=fill&fm=avif)